The WHY

Getting familiar with the basics of data analytics can be a daunting task. With so many buzzwords flying around and different technologies involved, folks often get confused, and it takes time to get the basics right.

This workshop was designed to demystify getting started with data analytics and data quality for:

- Engineers who want to get started with data analytics

- Business owners and managers who work with data analytics and want to understand how things work under the hood

The WHAT

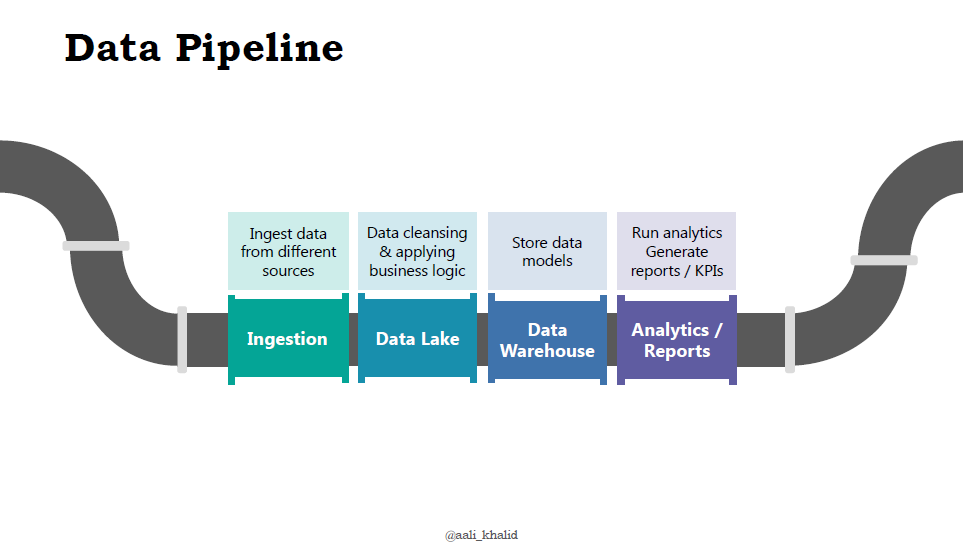

Before we could talk about data quality, it was important to give an intro to big data, data pipelines, and all the stages across the pipeline.

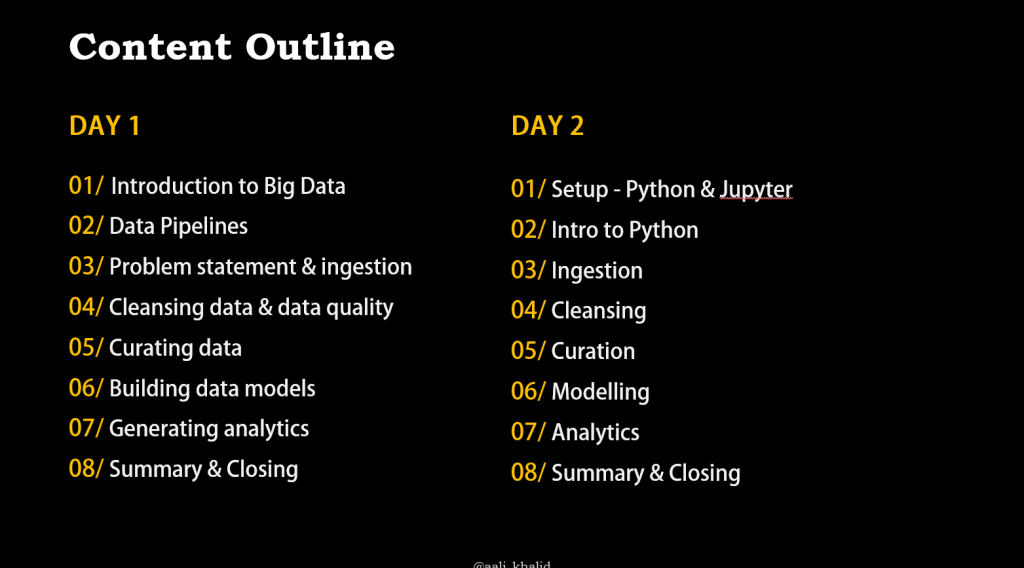

Across two 2-hour sessions over two days, we first discussed:

- Fundamentals of data

- What data pipelines are

- Common activities at each stage of the pipeline

- Introduction to data quality

- Sample data quality activities at each stage in the pipeline
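To give a feel for what a data quality activity can look like, here is a minimal sketch in plain Python. The records, column names, and the three checks (completeness, uniqueness, and a simple range check) are assumptions chosen for illustration; they are common examples of such checks, not necessarily the ones used in the workshop.

```python
# Hypothetical records, invented for illustration only.
rows = [
    {"order_id": 1, "customer_id": "c1", "quantity": 2},
    {"order_id": 2, "customer_id": None, "quantity": 1},
    {"order_id": 2, "customer_id": "c3", "quantity": -4},
]


def check_completeness(rows, column):
    """Return the rows where a required column is missing or empty."""
    return [r for r in rows if r.get(column) in (None, "")]


def check_uniqueness(rows, column):
    """Return the values that appear more than once in a key column."""
    seen, duplicates = set(), set()
    for r in rows:
        value = r.get(column)
        if value in seen:
            duplicates.add(value)
        seen.add(value)
    return sorted(duplicates)


def check_range(rows, column, minimum):
    """Return the rows whose numeric value falls below the allowed minimum."""
    return [r for r in rows if r[column] < minimum]


# Summarize the findings in one small report.
report = {
    "missing_customer_id": len(check_completeness(rows, "customer_id")),
    "duplicate_order_ids": check_uniqueness(rows, "order_id"),
    "negative_quantity": len(check_range(rows, "quantity", 0)),
}
print(report)
```

Each check answers one narrow question about the data, which makes it easy to run the same checks at different stages of a pipeline and compare the results.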

The first day was mostly about getting the basics right and internalizing the activities at each stage, without getting into the technical details of the code.

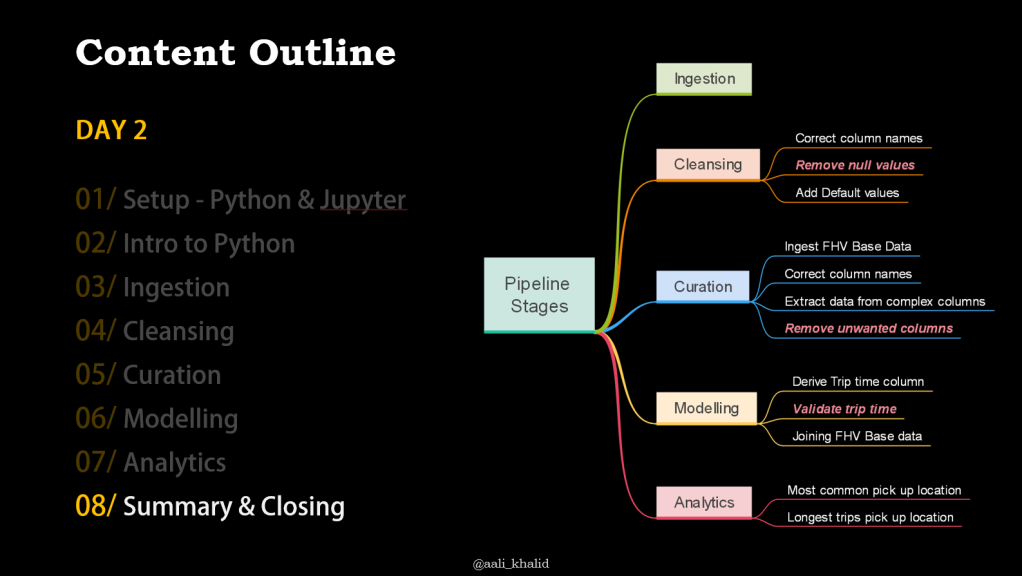

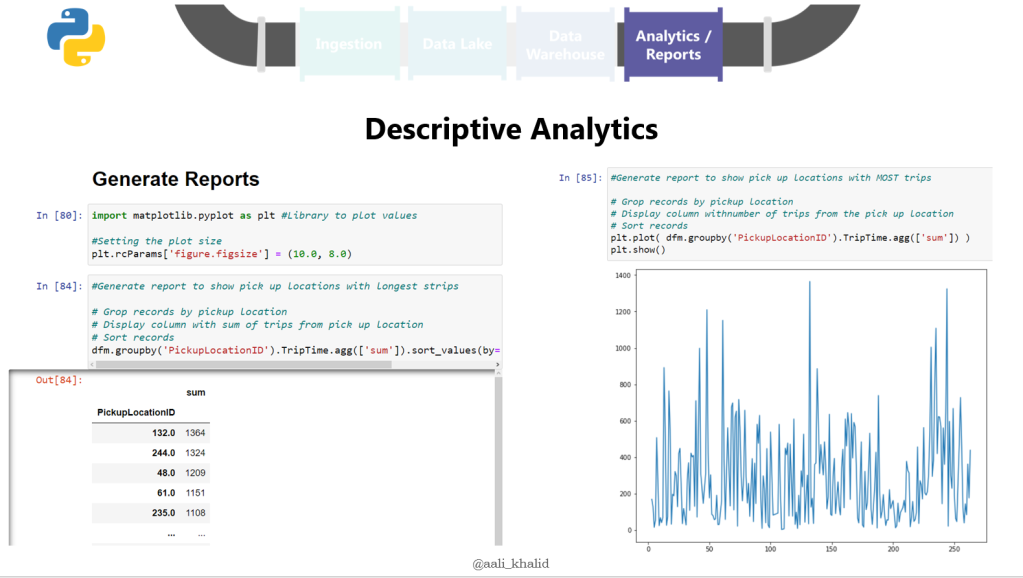

Once folks had an idea of what needs to happen at each stage, participants spent the second day implementing every stage of the pipeline hands-on.

The HOW

This was the tricky part of planning the workshop. In most workshops, participants jump into coding after some basic theory. I’ve always found that to be a rough transition that makes it hard for participants to follow along, especially if they don’t write code on a daily basis.

Day 1

Therefore, the first day was about understanding the concepts. The secret was to avoid death by PowerPoint: participants actually performed the steps across the pipeline, just not with code. To make life easy, we did that in Excel. No tooling knowledge required, pure focus on understanding the WHY of each activity!

Day 2

The second day was all about coding in baby steps. We worked through:

- Learning the basics of working with notebooks

- An intro to coding in Python

- Ingesting different types of data sources

- Curation activities like flattening data structures

- Creating derived columns and combining data sets to build a basic data model

The code was quite a bit to go over, but it was designed so that participants can easily follow along afterwards, with ample documentation within the code itself.
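As a rough illustration of the Day 2 steps (ingest, flatten, derive, combine), here is a minimal sketch using only the Python standard library. This is not the workshop's code: the sample data, the column names, and helper functions such as `flatten` and `join_orders_to_customers` are all hypothetical and exist only to show the shape of each step.

```python
import csv
import io
import json

# Hypothetical sample data, invented for illustration only.
ORDERS_CSV = """order_id,customer_id,quantity,unit_price
1,c1,2,9.50
2,c2,1,20.00
3,c1,3,5.00
"""

CUSTOMERS_JSON = """[
  {"id": "c1", "name": "Asha", "address": {"city": "Pune", "country": "IN"}},
  {"id": "c2", "name": "Ben", "address": {"city": "Leeds", "country": "GB"}}
]"""


def ingest_csv(text):
    """Ingest: parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def ingest_json(text):
    """Ingest: parse a JSON array into a list of dictionaries."""
    return json.loads(text)


def flatten(record, parent_key="", sep="_"):
    """Curate: turn nested dictionaries into one flat dictionary."""
    flat = {}
    for key, value in record.items():
        name = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value
    return flat


def add_total(order):
    """Derive: compute a new column from existing ones."""
    quantity = int(order["quantity"])
    unit_price = float(order["unit_price"])
    return {**order, "total": round(quantity * unit_price, 2)}


def join_orders_to_customers(orders, customers):
    """Combine: attach customer attributes to each order (a left join)."""
    by_id = {c["id"]: c for c in customers}
    joined = []
    for order in orders:
        customer = by_id.get(order["customer_id"], {})
        joined.append({**order,
                       "customer_name": customer.get("name"),
                       "customer_city": customer.get("address_city")})
    return joined


orders = [add_total(o) for o in ingest_csv(ORDERS_CSV)]
customers = [flatten(c) for c in ingest_json(CUSTOMERS_JSON)]
model = join_orders_to_customers(orders, customers)

for row in model:
    print(row["order_id"], row["customer_name"], row["customer_city"], row["total"])
```

In a notebook, each of these functions would typically live in its own cell so the output of every stage can be inspected before moving on to the next one.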

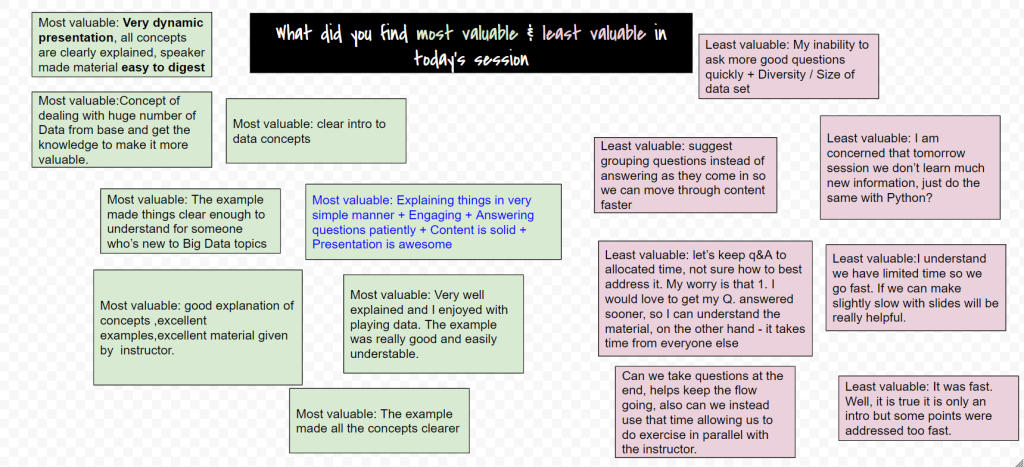

The Feedback

The content we covered was A LOT. I think it could easily have filled 6 hours instead of 4. It was a toss-up between dropping content and trying to cover more in less time.

That’s where my personality kicks in. I have a hard time cutting down on content because I feel I need to share what I know. I’ve experienced the struggle of learning this, and I hope people who learn from me don’t have to struggle as much.

“Explaining things in a very simple manner, Engaging answering questions patiently – Content is Solid and awesome presentation”

Participant feedback